Trust Is the New Energy

Why Optimization Without Meaning Is Hollowing Civilization

Compute per watt. Brains at 20 watts. GPUs at 700. Data centers burning megawatts to model probability.

The future, we’re told, is about efficiency. More intelligence per joule. Better substrates. Neuromorphic chips. Optical networks. In-memory compute.

And yes, energy matters. Everything reduces to thermodynamics eventually.

But energy isn’t the true bottleneck.

Trust is.

Energy powers systems. Trust powers coordination. And coordination is civilization.

We Optimized the Wrong Thing

Modern civilization is not collapsing because we lack compute.

We have more processing power than any generation before us. Global connectivity. Real-time data. Algorithmic decision systems. Predictive modeling at planetary scale.

What we lack is coherence.

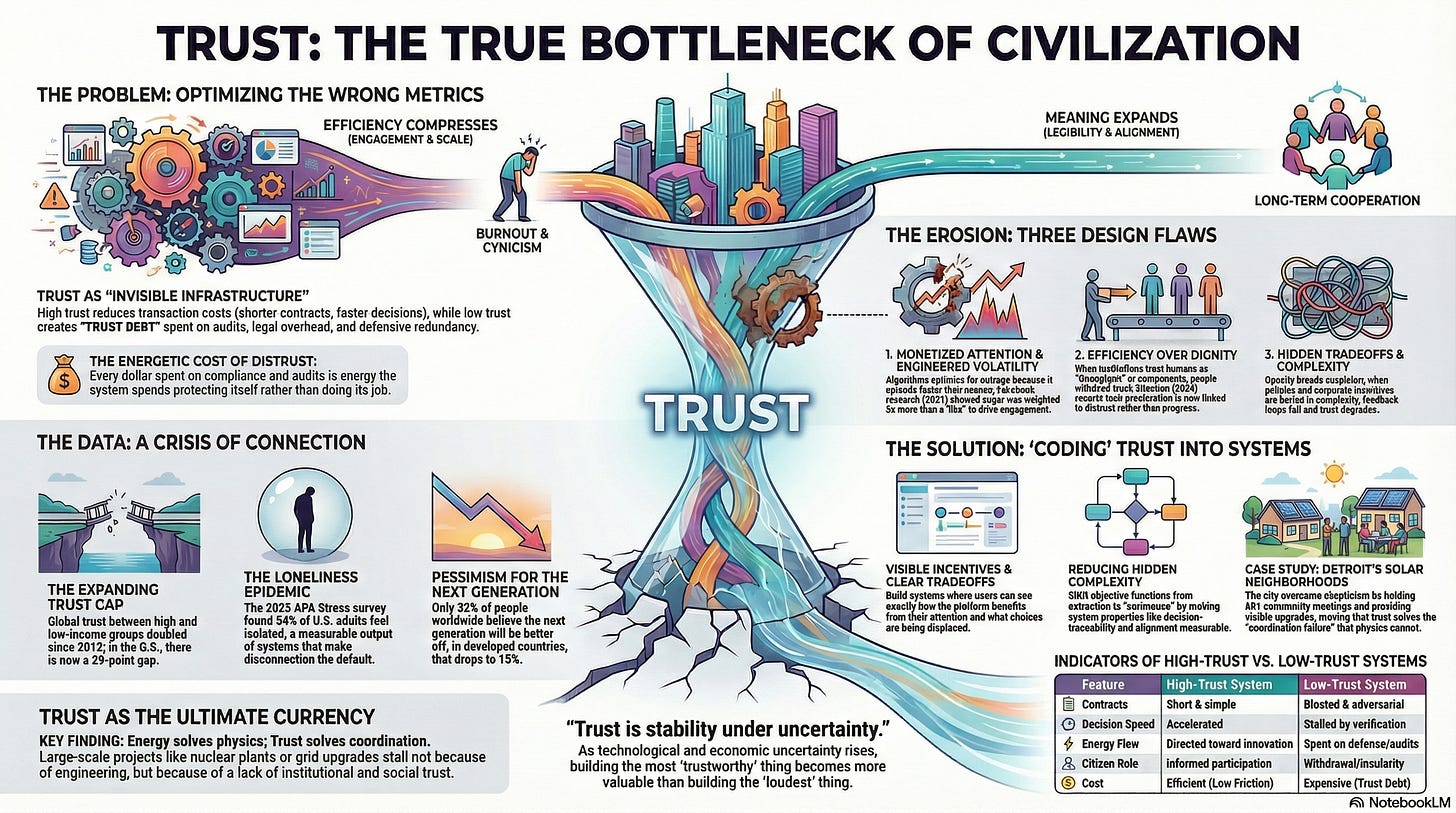

We optimized engagement. Growth. Scale. Efficiency. We did not optimize legibility. Dignity. Long-term alignment. Shared meaning.

Efficiency compresses. Meaning expands.

Efficiency without meaning creates burnout, distrust, isolation, cynicism. We chose efficiency first. Now we’re paying the coordination cost.

Trust Is Invisible Infrastructure

Trust reduces transaction cost.

When trust is high, contracts shrink, decisions accelerate, audits decrease, cognitive load drops, cooperation increases. When trust is low, everything requires verification. Every interaction feels adversarial. Systems grow bloated. People disengage. Isolation becomes rational.

Trust is not emotional fluff. It is structural predictability over time.

My expectation of your behavior aligns with what you actually do. That’s it. And that’s programmable.

Economists have a name for what fills the gap when trust disappears: transaction cost. Every dollar spent on contracts, audits, compliance, legal overhead, and redundancy is a dollar the system spends because it doesn’t trust itself.

Low-trust systems are not just unpleasant. They are energetically inefficient.

Francis Fukuyama documented this at the national level. Countries with high social trust demonstrate measurably lower institutional friction and stronger long-run economic coordination. High-trust societies don’t just feel different. They compound differently.

When institutional trust falls, the overhead rises. More lawyers. More audits. More redundancy. More defensiveness. The system spends more energy protecting itself than doing its job.

We have been building low-trust infrastructure for decades and calling it efficiency.

It is not efficient. It is expensive trust debt.

Why Trust Is Eroding

Three design choices got us here.

1. Attention Is Monetized

Outrage spreads faster than nuance. Conflict holds attention longer than coherence. Platforms optimized for time-on-platform.

We engineered volatility into the nervous system of civilization. Not by accident, but by objective function.

Facebook’s internal research, leaked in 2021, showed the algorithm weighted an anger reaction five times as heavily as a like. Posts built to trigger outrage were also more likely to carry misinformation. The objective function and the harm were the same function.

At Twitter, engineers received automated alerts when engagement metrics fell. Reducing conflict, if it reduced engagement, was treated as a bug.

Academic research confirmed what the documents showed. A 2021 study found that platform design amplifies moral outrage online. A 2023 study in Nature Human Behaviour found that platforms don’t just reflect division. They manufacture the perception of more division than actually exists.

We didn’t stumble into this. We built it.

2. Institutions Optimized Efficiency Over Dignity

Supply chains optimized cost. Labor optimized throughput. Governance optimized procedural defensibility.

But people don’t experience life as throughput. They experience it as meaning.

When systems treat humans as components, humans withdraw trust. It’s not rebellion. It’s a reasonable response.

The Edelman Trust Barometer has tracked this withdrawal for over 25 years. The 2024 report documented that technological acceleration was now associated with distrust, not progress. The 2026 report went further: grievance has devolved into insularity. Seven in ten people globally now say they are unwilling or hesitant to trust someone who holds different values, uses different information sources, or comes from a different background. The trust gap between high- and low-income groups has more than doubled since 2012, from 6 points to 15 globally, and in the U.S. it stands at 29 points. Only 32 percent of people worldwide believe the next generation will be better off. In developed countries, that number drops to 15 percent.

People aren’t just losing trust in institutions. They’re retreating from each other. The mentality, as Edelman put it, has shifted from “we” to “me.”

We made the future feel threatening. And now people are narrowing their worlds to match.

3. Tradeoffs Are Hidden

Complexity obscures consequences. Policies pass without visible second-order effects. Budgets are approved without interactive modeling. Corporate incentives are buried in compensation structures.

Opacity breeds suspicion. Suspicion degrades trust. Trust degradation increases friction. Friction increases cost. Cost increases pressure. Pressure accelerates optimization. Optimization without meaning further erodes trust.

Feedback loop complete.

Robert Putnam documented the trajectory decades before platforms existed. Declining civic participation. Eroding interpersonal trust. Institutions that extract without replenishing. Bowling Alone was published in 2000. The trend he charted had been running since the 1960s. We’re living in the late chapters of that book.

And nothing about platforms or AI changed the direction. They just changed the speed.

The Real Frontier Isn’t Smarter AI

We don’t need bigger models. We need better objective functions.

Right now, most software optimizes engagement, revenue, efficiency, speed. Very little software optimizes legibility, predictability, long-term coherence, incentive transparency.

The next frontier in computing is not just energy-aware architectures. It is trust-aware systems.

This is not a soft argument. It is an engineering one. The same way we learned to measure latency, uptime, and throughput, we can learn to measure trust signals: response consistency, incentive visibility, decision traceability, alignment between stated goals and observed behavior. These aren’t feelings. They’re system properties.
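One of those signals, alignment between stated goals and observed behavior, can be sketched in a few lines. This is only an illustration, not an established metric: the `Commitment` record, the `alignment_rate` function, and the sample history are all hypothetical, and exact string matching stands in for whatever comparison a real system would need.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commitment:
    """One stated promise paired with what was actually observed (hypothetical record)."""
    stated: str
    observed: str


def alignment_rate(history: list[Commitment]) -> float:
    """Share of commitments where observed behavior matched the stated behavior."""
    if not history:
        raise ValueError("need at least one commitment to score")
    kept = sum(1 for c in history if c.stated == c.observed)
    return kept / len(history)


# Made-up history for illustration only.
history = [
    Commitment("respond within 2 days", "respond within 2 days"),
    Commitment("no data resale", "no data resale"),
    Commitment("publish budget quarterly", "published once a year"),
    Commitment("fix reported outage", "fix reported outage"),
]

print(f"alignment rate: {alignment_rate(history):.2f}")  # prints "alignment rate: 0.75"
```

Tracked over time, a number like this behaves the way uptime does: a single reading says little, but the trend shows whether expectation and behavior are converging or drifting apart.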

What “Coding Trust” Actually Means

Not branding. Not mission statements. Not moral posturing.

Coding trust means building systems that:

Make incentives visible. If a system benefits from your attention, you should be able to see that.

Expose tradeoffs clearly. Every choice displaces something. Show what.

Quantify second-order effects. Not perfectly. But enough to inform, not obscure.

Reduce hidden complexity. Complexity isn’t the enemy. Hidden complexity is.

Align short-term and long-term reward structures. When these diverge, people feel it, even when they can’t name it.

Trust is not a feeling. It is stability under uncertainty.

As uncertainty rises (technologically, politically, economically), trust becomes more valuable than raw compute. Not as a metaphor. As a structural fact.

A fair question: won’t trust tools themselves get gamed? Won’t a dashboard designed to build trust become another surface for manipulation? Yes. That’s a real risk. These aren’t silver bullets. But a gamed transparency layer is still a measurable improvement over no transparency at all. Opacity guarantees distrust. Legibility at least creates the conditions where manipulation can be caught and corrected. The alternative, continuing to build in the dark, is not safer. It’s just less accountable.

Energy and Trust Are Connected

Energy is physical constraint. Trust is social constraint.

You cannot build stable high-energy systems without trust.

The energy transition requires long-term capital investment, multi-decade coordination, policy continuity, cross-border cooperation. Without trust, projects stall, opposition rises, incentives distort, risk premiums increase.

Consider what it actually takes to build a nuclear plant or a continental-scale grid upgrade. The physics is solved. The engineering is known. What kills these projects is coordination failure: permitting delays, public opposition rooted in historical betrayal, political incentives misaligned with infrastructure timelines, capital that won’t commit to 20-year horizons because the policy landscape shifts every four.

Energy solves physics. Trust solves coordination. Coordination is the harder problem.

This isn’t abstract. In Detroit, the city is converting 165 acres of vacant, blighted land into neighborhood solar arrays under its Solar Neighborhoods Initiative. The project aims to power 127 municipal buildings with clean energy by 2034. But the technical side was never the hard part. The hard part was trust. Residents in these neighborhoods had reason to be skeptical of city promises. So the city held over 60 community meetings, conducted door-to-door outreach, and let neighborhoods apply voluntarily. Homeowners within each solar footprint receive up to $25,000 in energy efficiency upgrades to their homes. Residents helped choose the fencing, the landscaping, the site layouts.

The energy credits flow to city buildings, not directly to residents. That tradeoff is visible, explained, and compensated. And because it is, 19 neighborhoods applied. The program isn’t perfect. But it works because the process was designed to earn trust before asking for participation. That’s constraint redesign in practice, on a real piece of land, in a city that has every reason to distrust institutions.

Governance: The Most Undercoded System

Governance is still largely analog.

Imagine if it operated with interactive budget simulators, real-time policy consequence models, energy-aware cost dashboards, incentive mapping tools visible to citizens.

Not propaganda. Not narrative manipulation. Clear constraint modeling.

Governance today suffers from feedback lag, incentive opacity, and cognitive overload. All three are engineering problems. They are not treated as such.

This isn’t a utopian sketch. Pieces of it already exist. Estonia built a digital governance layer that lets citizens see exactly how their data is used by government. Taiwan’s vTaiwan platform uses open deliberation tools to shape real legislation. Detroit launched its Open Data Portal in 2015, making city operations data freely accessible, a move born partly from the institutional wreckage left by corruption scandals and the largest municipal bankruptcy in American history. The portal didn’t fix trust overnight. But it created a surface where accountability could start to grow.

These aren’t perfect. But they demonstrate something important: when you make the system legible, participation goes up and opposition goes down. Not because people agree more. Because they trust the process more.

The gap between what governance could be and what it currently is might be the single largest trust deficit in modern life.

The Meaning Crisis Is a Systems Failure

We keep diagnosing loneliness, disengagement, and fragmentation as cultural decay.

It is more mechanical than that.

Modern systems reward isolation, spectatorship, algorithmic validation, low-friction outrage. Real engagement is costly. Isolation is cheap. The path of least resistance leads away from other people.

In 2023, the U.S. Surgeon General issued an advisory on loneliness. The 2025 APA Stress in America survey confirmed the trend is still deepening: 54 percent of U.S. adults reported feeling isolated, 50 percent felt left out, and 50 percent said they lacked companionship. Sixty-two percent said societal division itself was a significant source of stress. Among those stressed by division, 61 percent reported feeling isolated, compared to 43 percent among those who weren’t. Nearly seven in ten adults said they needed more emotional support in the past year than they received, up from 65 percent the year before.

This is not a mood. It is a measurable output of systems that made disconnection the default.

People feel the difference between engagement metrics and meaning. Between being optimized for and being respected. That gap is where trust goes to die. The erosion is structural, even if no one designed it on purpose.

Trust as Currency

Energy fuels machines. Trust fuels human networks. Human networks allocate energy.

Therefore: trust determines where energy flows.

Capital flows where trust exists. Collaboration forms where trust exists. Innovation compounds where trust exists.

Distrust redirects energy toward defense. Audits, legal overhead, redundancy, bureaucracy. Every dollar spent on organizational self-protection is a dollar not spent on the thing the organization exists to do.

Low-trust systems are energetically inefficient. Trust is an efficiency multiplier. And unlike compute, you can’t brute-force it. You have to earn it through consistency, transparency, and time.

So What Do We Build?

Not another engagement engine.

Build systems that reduce hidden friction. Clarify tradeoffs. Surface incentive structures. Price energy honestly. Make consequences legible.

The name matters less than the principle: shift the objective function from extraction to coherence.

One concrete example: a city budget that shows, in real time, where each dollar goes and what it displaces. Not a PDF released annually. A live model. Citizens can see the tradeoff between a new road and a school repair. The decision doesn’t change. The dignity of knowing does.

Another: an energy dashboard for a neighborhood that shows not just consumption but cost distribution. Who pays what. Where the subsidies go. What the infrastructure tradeoffs are. Not to generate outrage but to generate informed participation.

Another: a corporate compensation tool that maps executive incentive structures against long-term company health. Not to shame anyone. To make the alignment, or misalignment, visible.

These aren’t fantasies. They’re interface problems. The data already exists. The tradeoffs are already being made. The question is whether we build the layer that makes them legible.
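The city budget example can be sketched as a small displacement model. This is a minimal sketch under loud assumptions: the `Proposal` type, the `displaced_by` function, and every dollar figure are invented for illustration, and "displaced" is defined narrowly as a proposal that would fit the budget on its own but no longer fits once the chosen item is funded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    """A hypothetical budget line item."""
    name: str
    cost: int  # whole dollars


def displaced_by(chosen: Proposal, budget: int, others: list[Proposal]) -> list[str]:
    """Names of proposals that fit the budget alone but not after `chosen` is funded."""
    remaining = budget - chosen.cost
    if remaining < 0:
        raise ValueError(f"{chosen.name} exceeds the budget")
    return [p.name for p in others if remaining < p.cost <= budget]


# Made-up figures for illustration only.
road = Proposal("new road", 700_000)
others = [Proposal("school repair", 400_000), Proposal("park lighting", 150_000)]

print(displaced_by(road, 1_000_000, others))  # prints ['school repair']
```

The model does not decide anything. It only makes the displacement visible, which is the point of the example: the tradeoff between the road and the school repair is stated instead of implied.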

That’s a trust-aware system. It costs less than the distrust it prevents.

The Personal Question

Many people feel insignificant in the face of systemic problems.

That feeling is rational in opaque systems. When tradeoffs are invisible, participation feels meaningless. Trust declines when agency feels symbolic.

The solution is not individual heroism. It is constraint redesign.

You don’t “save civilization.” You modify one surface where friction decreases, incentives align, clarity increases, energy is respected, dignity is preserved.

Small constraint changes compound. The people who built the internet’s trust layer didn’t announce it. They shipped protocols. Nobody held a press conference for TCP/IP. It just worked, and then everything else could work on top of it.

The same is true now. The people who will matter most in the next decade aren’t the ones building the loudest thing. They’re the ones building the most trustworthy thing.

The Thesis

The future will not be won by faster models, bigger GPUs, louder narratives, or more optimized consumption.

It will be stabilized by systems that encode trust, objective functions that value coherence, infrastructure that respects energy, governance that exposes tradeoffs, and technology that reduces cognitive overload instead of amplifying it.

Energy is the physics. Trust is the currency.

Without trust, energy amplifies instability. With trust, energy amplifies coordination.

Coordination is civilization’s true operating system.

And right now, that operating system needs a patch.

Justin Shank writes about systems, trust, and the gap between what we build and what we actually need.

Sources: 2026 Edelman Trust Barometer (edelman.com); 2024 Edelman Trust Barometer (edelman.com); WSJ Facebook Files / Frances Haugen testimony, 2021; Nieman Journalism Lab, Oct 2021; MIT Technology Review, Oct 2021; PMC8363141, 2021; Nature Human Behaviour 7:917–927, 2023; Putnam, Bowling Alone, 2000; APA Stress in America 2025: A Crisis of Connection; U.S. Surgeon General Advisory on Loneliness, 2023; City of Detroit Solar Neighborhoods Initiative (detroitmi.gov); City of Detroit Open Data Portal (data.detroitmi.gov).