The Grid Is the New Data Center

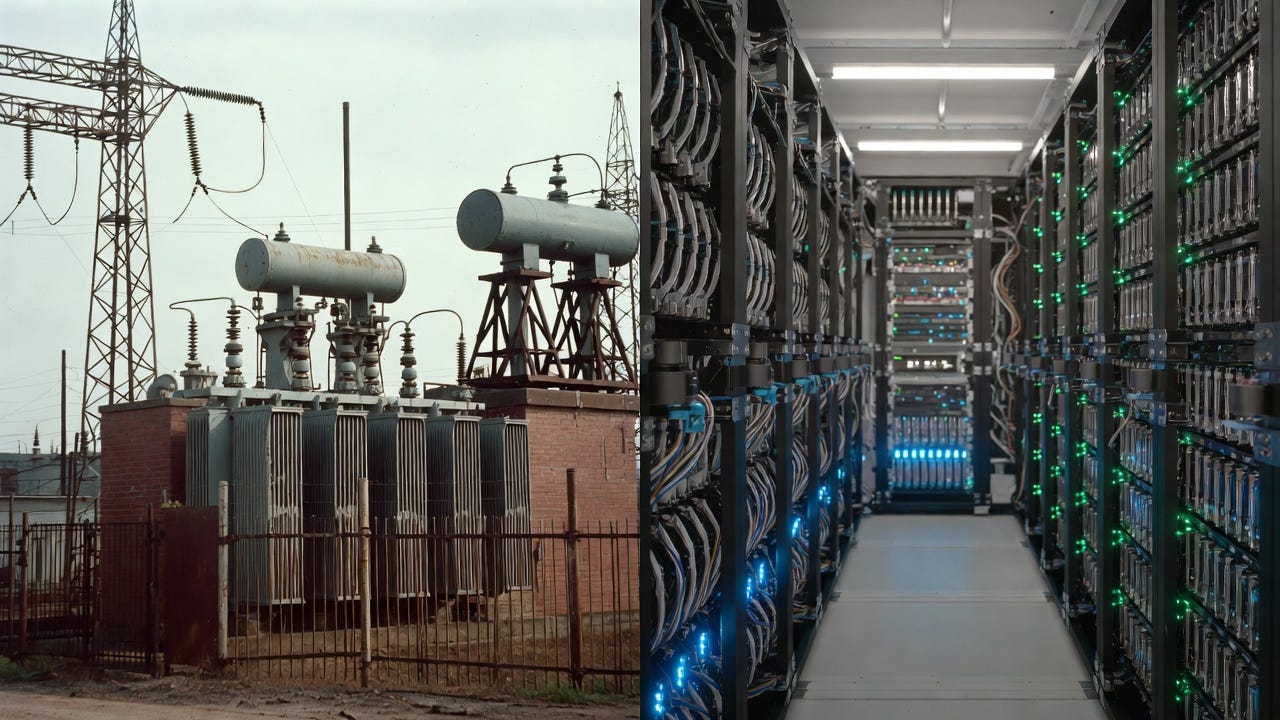

The real bottleneck for AI isn't compute. It's electrons.

In 1979, Unit 2 of the Three Mile Island nuclear plant suffered a partial meltdown. Its neighbor, Unit 1, kept operating for decades until it was shut down in 2019 for economic reasons. In 2027, it is slated to come back online, not to power a city, but to power data centers. Microsoft signed a 20-year deal to take the entire output.

That sentence is the whole argument. We crossed a threshold somewhere in the last few years, and most people haven’t noticed it yet. The constraint on artificial intelligence is no longer the quality of the models, or the speed of the chips, or the number of engineers who can build them. It’s the physical flow of electrons. It’s megawatts. It’s grid access and cooling water and transmission lines and permitting delays that average four years before a single shovel breaks ground.

The grid is the new data center. And whoever controls the joules controls the future of intelligence.

“AI’s next bottleneck is not better models but joules: who can secure them, where, and under what rules.”

The Scale Is Hard to Comprehend

Training a single large language model consumes on the order of tens of thousands of megawatt-hours, comparable to the annual electricity use of thousands of U.S. households. And that’s just training once. Inference, running the model across millions of users and billions of queries every day, can match or exceed that over a model’s lifetime.
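To make the household comparison concrete, here is a rough back-of-envelope sketch. The training-run sizes and the figure of about 10,500 kWh per household per year are assumptions chosen for illustration, not measurements of any particular model or utility.

```python
# Back-of-envelope conversion from training energy to household-years.
# Both inputs are illustrative assumptions, not measurements of any real model.

KWH_PER_MWH = 1_000
ASSUMED_HOUSEHOLD_KWH_PER_YEAR = 10_500  # assumed U.S. average; varies by year and region


def household_equivalents(training_mwh: float,
                          household_kwh_per_year: float = ASSUMED_HOUSEHOLD_KWH_PER_YEAR) -> float:
    """Number of households whose annual electricity use equals training_mwh."""
    return training_mwh * KWH_PER_MWH / household_kwh_per_year


for mwh in (20_000, 50_000):  # hypothetical training runs
    print(f"{mwh:,} MWh ~ {household_equivalents(mwh):,.0f} households for one year")
```

Under these assumptions, a 20,000 MWh run works out to roughly 1,900 household-years of electricity, which is where “thousands of households” comes from.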

In the U.S., new power projects trying to connect to the grid wait a median of five years in interconnection queues, with some regions averaging eight. Those queues currently hold roughly 2,600 gigawatts of proposed generation and storage, the supply that new data centers are counting on. Most of it won’t survive the wait.

Cooling compounds it. Dense GPU clusters run hot. Very hot. Keeping them at operating temperature requires massive water or air systems that frequently collide with local resources. In Arizona, data centers are competing with agriculture for groundwater in a desert state already under water stress. In colder climates, ambient air provides a free edge, which is partly why Sweden and Finland are drawing new AI data centers.

Efficiency gains offer some relief. Smaller models, sparsity, quantization, and specialized chips can reduce the energy used per inference. That buys time. It doesn’t change the underlying equation: as long as the industry continues scaling, the grid remains the decisive constraint.
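The “underlying equation” can be sketched as total energy equals queries times energy per query. The numbers below are hypothetical, picked only to show the shape of the problem: if efficiency halves the energy per query while usage grows fourfold, total demand still doubles.

```python
# Toy model: annual inference energy = queries per day x energy per query x days.
# All inputs are hypothetical and chosen only to illustrate the arithmetic.

WH_PER_MWH = 1_000_000


def total_energy_mwh(queries_per_day: float, wh_per_query: float, days: int = 365) -> float:
    """Annual inference energy in MWh."""
    return queries_per_day * wh_per_query * days / WH_PER_MWH


# Baseline: one billion queries a day at an assumed 0.3 Wh each.
baseline = total_energy_mwh(queries_per_day=1e9, wh_per_query=0.3)

# Efficiency halves the energy per query, but usage grows fourfold.
scaled = total_energy_mwh(queries_per_day=4e9, wh_per_query=0.15)

print(f"baseline: {baseline:,.0f} MWh/year")
print(f"scaled:   {scaled:,.0f} MWh/year")
print(f"ratio:    {scaled / baseline:.1f}x")
```

The point of the sketch is the ratio, not the absolute figures: efficiency gains reduce demand only if usage grows more slowly than efficiency improves.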

Where Compute Actually Lives

Energy availability isn’t uniform, so neither is AI growth. Cheap, reliable power pulls compute infrastructure the way gravity pulls water, reshaping where innovation happens. This “cognitive geography” follows physics and policy far more than it follows talent pools.

Virginia’s data center boom is the clearest example. Proximity to undersea cables and a robust power grid turned Loudoun County into “Data Center Alley,” which by varying estimates now hosts between 13% and 22% of global data center capacity. Low latency and power redundancy attract AI workloads, which creates a self-reinforcing loop: jobs and talent follow investment, which draws more investment.

Texas stands out for deregulation and speed. Its independent grid (ERCOT) allows faster hookups, and abundant natural gas keeps baseline costs down. In 2025 alone, data center interconnection requests in ERCOT surged nearly 300%, pushing the large-load queue above 226 gigawatts, more than 70% of it from data centers. Wind and solar add intermittency, but battery storage is bridging the gaps.

“Where electrons flow freely, cognition scales.”

The pattern extends globally. In China, Sichuan’s hydropower draws data centers and training runs. In the EU, sustainability reporting rules are starting to influence where facilities get built. Middle Eastern sovereign funds, having spent a century extracting oil from the ground, are now pivoting to compute. The UAE is building hyperscale facilities that blend petrodollar capital with silicon at a scale that would have been unimaginable five years ago.

What this adds up to is a recentralization of tech around energy hubs, inverting the “distributed knowledge economy” narrative that defined the internet era. And concentration brings its own risk. When AI clusters in a handful of energy-rich geographies, a single grid failure, an extreme weather event, or a policy shift can disrupt a disproportionate share of global capacity. ERCOT’s 2021 freeze was a preview. Resilience and geographic diversity, not just raw capacity, are now infrastructure priorities.

1970s Scaffolding, 2020s Acceleration

Forget Moore’s Law as the pace-setter. Regulatory hurdles are now throttling AI progress at least as much as hardware limits.

Model architectures evolve fast. Building the power backbone to run them hits walls constructed decades ago. Environmental reviews under NEPA can drag on for years; the average transmission project review alone takes 4.3 years and scrutinizes everything from wildlife impacts to visual aesthetics. Nuclear licensing, still governed largely by 1950s-era frameworks, stifles small modular reactors that could provide clean, dense power. Approvals for cross-state transmission lines routinely exceed a decade, slowed by NIMBY opposition, legal challenges, and jurisdictional complexity.

Local zoning adds more friction. Communities resist data centers over noise, visual impact, and land use, as seen in recent battles in Oregon and Georgia. Utility rate cases, where regulators set prices, spark additional debates over who pays for grid upgrades: ratepayers who didn’t ask for a hyperscale neighbor, or the companies benefiting from it.

“This 1970s scaffolding clashes with 2020s acceleration.”

AI firms are lobbying hard for reform. The inertia is real. Delays mean lost opportunities in a field where first-mover advantages compound year over year. The tension doesn’t require exaggeration. It’s a straightforward collision between the speed of innovation and the pace of governance.

The Sharper Question Nobody Is Asking

Here is where the conversation about AI and energy usually stops. Infrastructure. Permitting. Policy. Technical bottlenecks.

But there is a harder question underneath all of it.

It helps to distinguish two kinds of efficiency. Engineering efficiency asks: how do we get more compute per joule? Better chips, leaner models, smarter architecture. That’s the conversation everyone is having. Allocative efficiency asks something different: should we power this use of AI at all?

Megawatts aren’t neutral. They power specific pursuits.

In a constrained grid, every megawatt directed to a large language model serving entertainment queries is a megawatt not directed somewhere else. Consumer chatbots compete with climate modeling. Financial trading algorithms compete with drug discovery. Defense surveillance systems compete with open scientific research. In most cases, utilities and governments make these allocation decisions via processes that are opaque, incremental, and rarely framed as value judgments at all.

Surplus power in one region funds open research. Shortages elsewhere funnel capacity to high-margin enterprise. Over time, these choices embed values into AI’s fabric. Not through any dramatic decision, but through thousands of quiet infrastructure deals, zoning approvals, and rate cases.

This isn’t about machines rising up. It’s about human decisions rippling through scaled intelligence. The question of who controls the watts is the question of what kind of AI gets built, for whom, and at whose expense.

What Actually Moves the Needle

If energy bottlenecks AI, targeted policy can expand the pipe. These aren’t silver bullets, but practical levers with active political momentum:

Streamlined transmission approval. Fast-tracking interstate lines via federal overrides could cut approval timelines from years to months. Recent bipartisan bills have moved in this direction; implementation remains the challenge.

Nuclear reform. Modernizing licensing for small modular reactors, reducing barriers while maintaining safety standards, could unlock dense clean power. Wyoming and several other states have active pilot programs. The regulatory framework is beginning to move.

Grid-scale storage incentives. Tax credits for batteries and pumped hydro smooth renewable intermittency and make AI workloads more reliable without requiring new baseload plants.

Regional interconnection upgrades. Investing in high-voltage direct current (HVDC) lines to link isolated grids, including connecting ERCOT to the national system, would boost redundancy and unlock stranded capacity.

Carbon and emissions signals. Carbon pricing or clean-energy mandates that make siting in coal-heavy regions costly would steer build-out toward cleaner energy sources, tying the AI energy question directly to climate policy rather than treating them as separate problems.

Transparent allocation reporting. Requiring utilities to disclose data center deals and large-load agreements would create accountability and allow public scrutiny of decisions that are currently made with no public visibility.

The throughline: when intelligence was scarce, education policy shaped who had cognitive power. When energy is scarce, infrastructure policy does.

The Stakes Are Not Distant

Current projections from the International Energy Agency show global data center electricity demand reaching 945 terawatt-hours by 2030, roughly equivalent to Japan’s total electricity consumption today. The U.S. drives a large share of that. Data centers already account for 3 to 4 percent of national electricity consumption, and the share is climbing.
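Annual terawatt-hours are hard to picture next to the gigawatts used for grid capacity, so a quick conversion helps. This sketch assumes demand is spread evenly across a non-leap year, which real load is not, so treat the result as an average rather than a peak.

```python
# Convert an annual energy total (TWh) into the average continuous power (GW)
# needed to deliver it. Assumes perfectly flat demand across the year.

HOURS_PER_YEAR = 8_760  # non-leap year
GWH_PER_TWH = 1_000


def average_power_gw(annual_twh: float) -> float:
    """Average continuous power in GW implied by an annual energy total in TWh."""
    return annual_twh * GWH_PER_TWH / HOURS_PER_YEAR


print(f"945 TWh/year ~ {average_power_gw(945):.0f} GW of continuous demand")
```

On that flat-demand assumption, 945 terawatt-hours a year corresponds to roughly 108 gigawatts running around the clock.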

Interconnect and permitting delays are already pushing some capacity toward jurisdictions with looser constraints and less scrutiny. That dynamic accelerates if domestic reform stalls.

The outcome isn’t foreordained. It depends on whether policy widens the pipe, how much transparency exists around allocation decisions, and whether the public understands that AI’s expansion is fundamentally an energy story as much as a technology one.

“Who gets megawatts today will shape which kinds of intelligence get scaled tomorrow.”

The grid isn’t just the new data center. It’s the new policy frontier for AI. And unlike the algorithmic choices buried inside model training runs, infrastructure decisions are visible, physical, and politically contestable.

The electrons are the argument.

References:

IEA “Energy and AI” report (2025)

Microsoft/Three Mile Island restart, Reuters (2025)

ERCOT large-load queue data, ERCOT board presentation (Dec 2025)

NEPA transmission review timelines, Clean Air Task Force (2024)

U.S. data center demand projections, WRI / Goldman Sachs Research (2025)

Virginia data center capacity, Cardinal News / TeleGeography analysis (2025)

Arizona water use, Grist / Ceres (2026)