Bio-Digital Convergence

The New Architecture of Synthetic Intelligence Nodes

TL;DR

The data center of the future won’t just hum; it will breathe. Vascularized brain organoids and neuromorphic chips are converging right now into hybrid “synthetic intelligence nodes”: living computational systems that metabolize energy, self-organize, adapt in real time, and run on roughly 20 watts instead of megawatts. The world’s first commercial biological computer is already shipping in 2026.

This is no longer theory. It’s hardware. And it collapses the line between computer and organism, forcing new questions about infrastructure, ethics, and what it actually means for something to be alive.

Imagine walking into a data center and hearing something unexpected: not the steady white noise of cooling fans, but the faint, rhythmic pulse of perfusion systems.

Rows of racks circulate nutrient fluid through branching tubes that look uncannily vascular.

Overheating isn’t a thermal anomaly here.

It is tissue death.

A system crash isn’t data corruption.

It is cellular necrosis.

This is not science fiction.

It is the early reality of bio-digital convergence: the fusion of living, vascularized neural organoids with neuromorphic silicon hardware. Two powerful technological curves have finally met: one grown from stem cells in bioreactors, the other etched in silicon at billion-neuron scale. The result is a genuinely new kind of computational node, neither purely biological nor purely digital, but something that metabolizes, learns, and fails in ways that have no precedent in computing history.

In this essay I lay out exactly why this convergence is accelerating in 2026, the technical breakthroughs that made it possible, the infrastructure and energy shifts already underway, the commercial products now on the market, and most importantly, the ethical architecture we must build in parallel before the industry scales.

The systems we are about to deploy will, in a very literal sense, breathe.

The only remaining question is whether we design that future with the foresight and restraint it demands.

The Data Center That Breathes

Imagine walking into a data center and hearing something unexpected: not just the white noise of cooling fans, but the faint pulse of perfusion systems. Rows of racks circulate nutrient fluid through branching tubes that look uncannily vascular. Overheating isn’t a thermal anomaly here. It is tissue death. A system crash isn’t data corruption. It’s cellular necrosis.

This is not a scene from science fiction. It is where two convergent curves in technology are pointing.

Over the past several years, laboratory-grown neural organoids have crossed a significant threshold. They can now be vascularized, metabolically sustained, and coaxed into producing structured oscillatory network activity. At the same time, neuromorphic processors (hardware built to emulate biological spiking neurons rather than binary transistor logic) have scaled past a billion simulated neurons per system. These are not parallel stories. They are the same story, told from opposite ends of the carbon-silicon divide.

Bio-digital convergence is the term for what happens when those ends meet: the fusion of living neural substrates with synthetic computational architectures. It replaces clock-driven determinism with event-based, adaptive processing. It trades binary switching for probabilistic firing. And it begins to collapse the distinction between a computer and an organism in ways that existing vocabulary struggles to handle.

The implications extend well beyond engineering efficiency. They touch infrastructure, ethics, sovereignty, and eventually the question of what it means for something to be alive.

The Technical Foundations

To understand why this convergence is happening now, it helps to appreciate how different biological and digital computation actually are, and why that difference is increasingly seen as an opportunity rather than an obstacle.

Traditional computing is deterministic. It operates through discrete binary states, 0 or 1, processed at extreme frequencies in synchronized clock cycles. The architecture is powerful and predictable, but it is also metabolically profligate. A GPU cluster training a large AI model can consume hundreds of kilowatts [1]. A warehouse-scale data center draws megawatts continuously [2]. The human brain, meanwhile, performs remarkably sophisticated inference on roughly 20 watts, less than a dim light bulb [3].
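To make the scale of that gap concrete, here is a minimal sketch of the arithmetic. Only the 20-watt brain figure comes from the text above; the 300 kW cluster and 10 MW data center values are assumed round numbers standing in for "hundreds of kilowatts" and "megawatts", and the function names are purely illustrative. Note that this compares power draw only, not the amount or kind of work done per watt.

```python
import math

# Power draws in watts. Only BRAIN_W comes from the essay; the other two
# are assumed, illustrative round numbers.
BRAIN_W = 20
GPU_CLUSTER_W = 300_000      # assumed stand-in for "hundreds of kilowatts"
DATA_CENTER_W = 10_000_000   # assumed stand-in for "megawatts"

def ratio_to_brain(power_w: float) -> float:
    """How many 20 W brains would draw the same power."""
    return power_w / BRAIN_W

def orders_of_magnitude(power_w: float) -> float:
    """Base-10 logarithm of the power ratio relative to the brain."""
    return math.log10(ratio_to_brain(power_w))

cluster_ratio = ratio_to_brain(GPU_CLUSTER_W)       # 15,000 brains
data_center_ratio = ratio_to_brain(DATA_CENTER_W)   # 500,000 brains
```

Under these assumed figures, the gap is roughly four to six orders of magnitude, which is the sense in which conventional computing is "metabolically profligate".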

Biological neural systems achieve this efficiency through a fundamentally different logic. They are probabilistic rather than deterministic. They are event-driven: neurons fire only when input thresholds are reached, not on every clock cycle. They self-organize, forming and pruning connections based on experience. And crucially, they change physically. Synaptic weights shift, new connections form, unused pathways atrophy. The brain doesn’t just run software. It rewrites its own hardware continuously.
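The event-driven idea can be sketched with a leaky integrate-and-fire neuron, a standard textbook simplification rather than a model of any particular organoid or chip. The threshold, leak factor, and input values below are arbitrary assumptions chosen for illustration, and the sketch deliberately leaves out the plasticity described above: it shows only that output is produced when a threshold is crossed, not on every time step.

```python
def simulate_lif(inputs, threshold=1.0, leak=0.9, reset=0.0):
    """Leaky integrate-and-fire: return the time steps at which the neuron fires.

    All parameter values are illustrative assumptions.
    """
    potential = 0.0
    spikes = []
    for t, current in enumerate(inputs):
        # Integrate the incoming current while the stored potential leaks away.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(t)     # an event is emitted only on threshold crossing
            potential = reset    # the membrane potential resets after a spike
    return spikes

# Ten time steps of input produce only two output events.
inputs = [0.0, 0.6, 0.6, 0.0, 0.0, 0.3, 0.3, 0.3, 0.9, 0.0]
spike_times = simulate_lif(inputs)  # [2, 8]
```

Most time steps produce no output at all, which is where the energy savings of event-driven designs come from: downstream work happens only when a spike occurs.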

A synthetic intelligence node, as researchers now envision it, would attempt to combine the strengths of both paradigms. At its core would be a living neural cluster, an organoid or structured neural tissue, interfaced with a synthetic substrate of electrode arrays, photonic connections, and microfluidic scaffolding. A neuromorphic digital bridge would translate the biological system’s spike patterns into machine-readable signals and back again. A metabolic stabilization system would handle oxygenation, nutrient delivery, and waste removal. The result would be neither purely biological nor purely digital, but something genuinely new: a computational node that metabolizes energy, self-organizes, and adapts in real time.

The engineering challenges in realizing this are formidable. Achieving reliable bidirectional signaling between electrodes and living neurons, what researchers call hybrid synapse stabilization, remains difficult. Living tissue tolerates far narrower temperature ranges than silicon. Signal interpretation is complicated by the inherent noise and plasticity of biological firing. And until recently, the most fundamental problem was simply keeping neural clusters alive long enough to be useful.

Vascularization: The Breakthrough That Changed the Trajectory

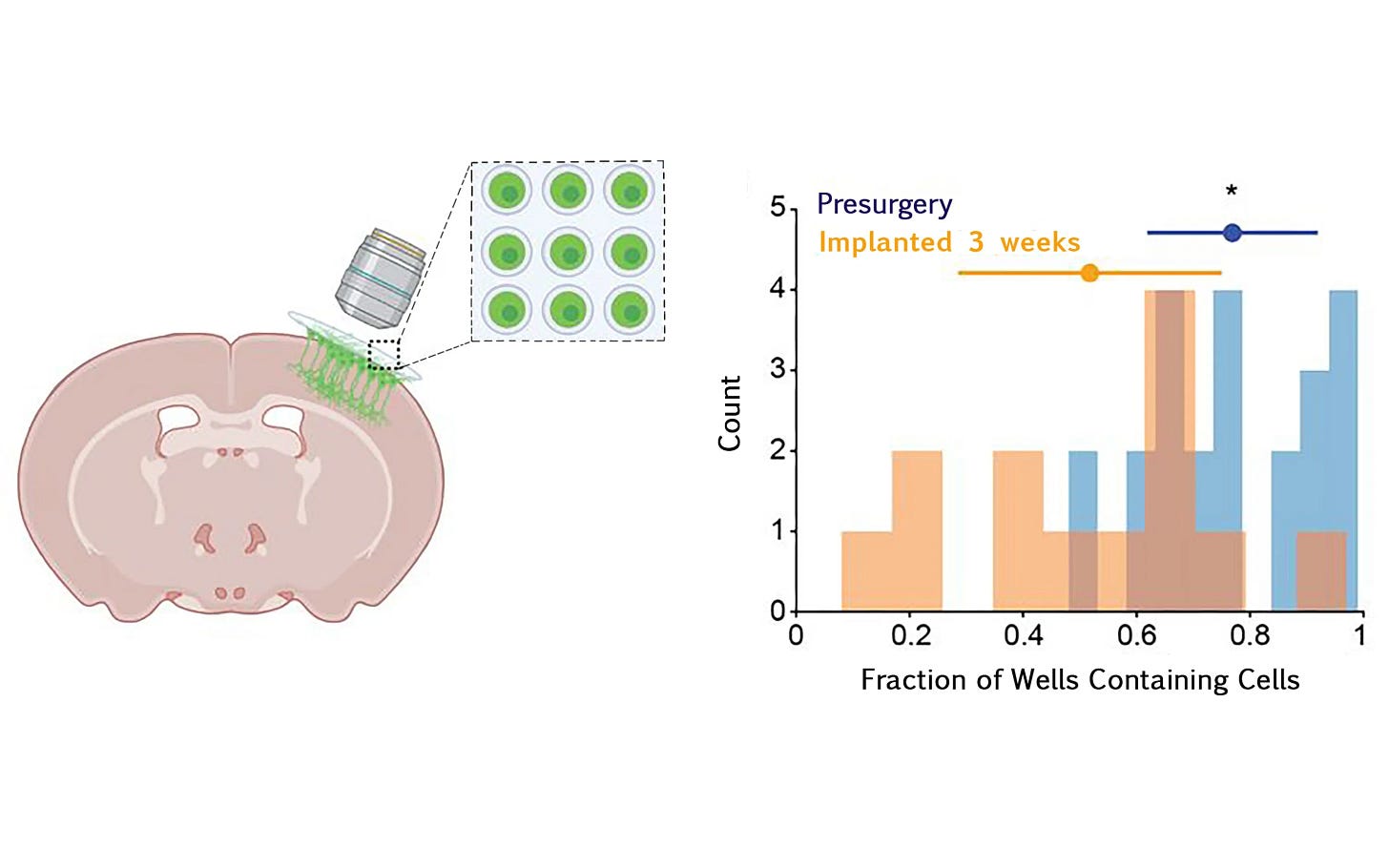

Organoids, three-dimensional clusters of neurons grown from stem cells, have existed in laboratories for years. But they faced a hard ceiling: without vasculature, oxygen and nutrients could only diffuse a few hundred micrometers into the tissue before being exhausted. Larger, more capable organoids would simply die at their core.

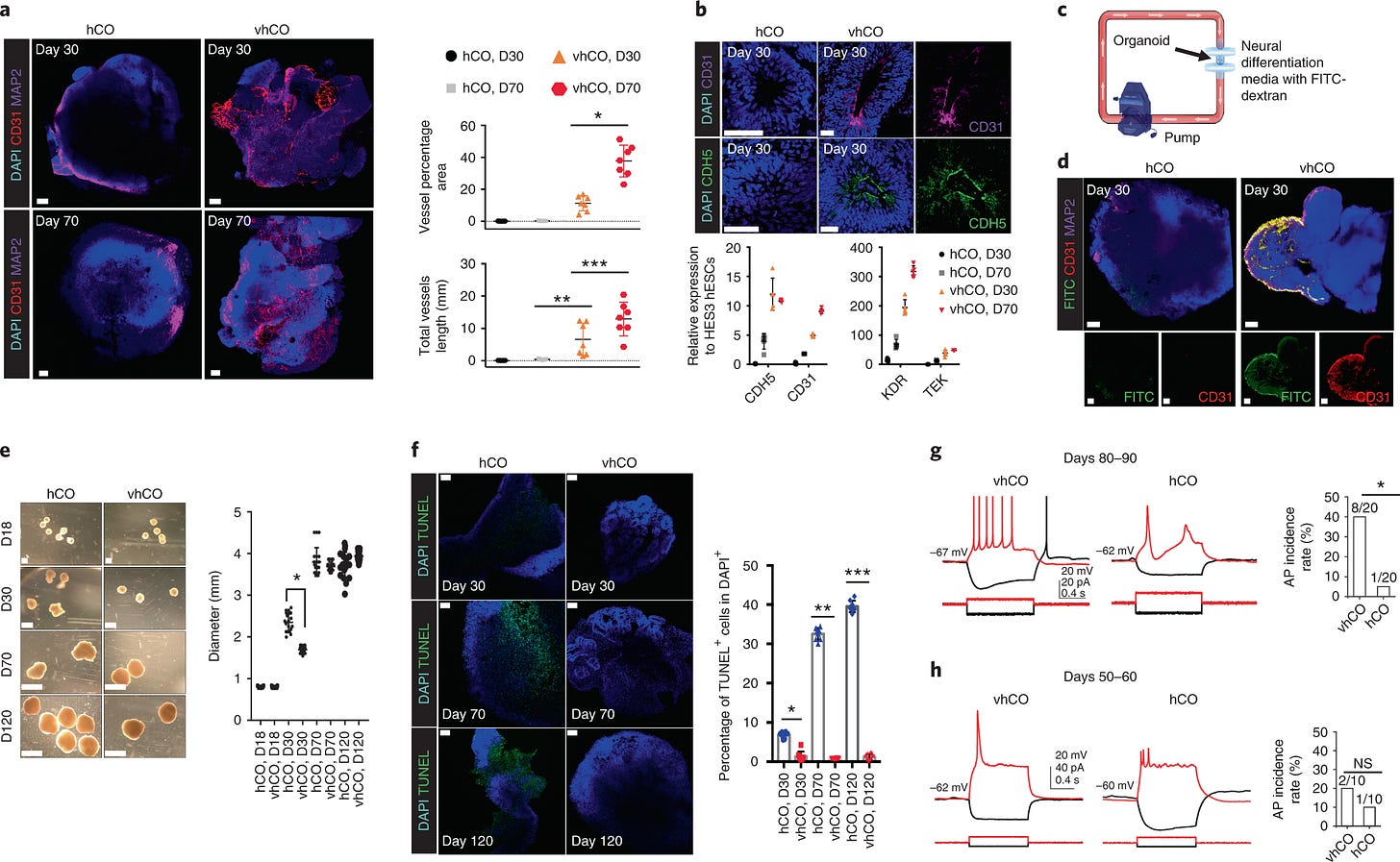

Recent advances have changed this. In July 2025, a team led by Annie Kathuria at Johns Hopkins University’s Department of Biomedical Engineering published results in Advanced Science describing a multi-region brain organoid (MRBO) that integrates cerebral, mid-hindbrain, and endothelial systems into a single structure, complete with rudimentary blood vessels and early blood-brain barrier formation [4]. The system captured broad cellular diversity found in early human fetal brain tissue, and after 60 days in culture, distinct brain regions retained their identities and began producing coordinated electrical activity as an interconnected network. Improved microfluidic scaffolds now simulate the oxygen gradients and flow dynamics of actual blood vessels, and multi-organ integration models have pushed metabolic fidelity further still. These aren’t incremental refinements. They remove the barrier that previously made organoid-based computing a theoretical curiosity rather than a practical direction.

Neuromorphic Hardware: The Digital Half of the Bridge

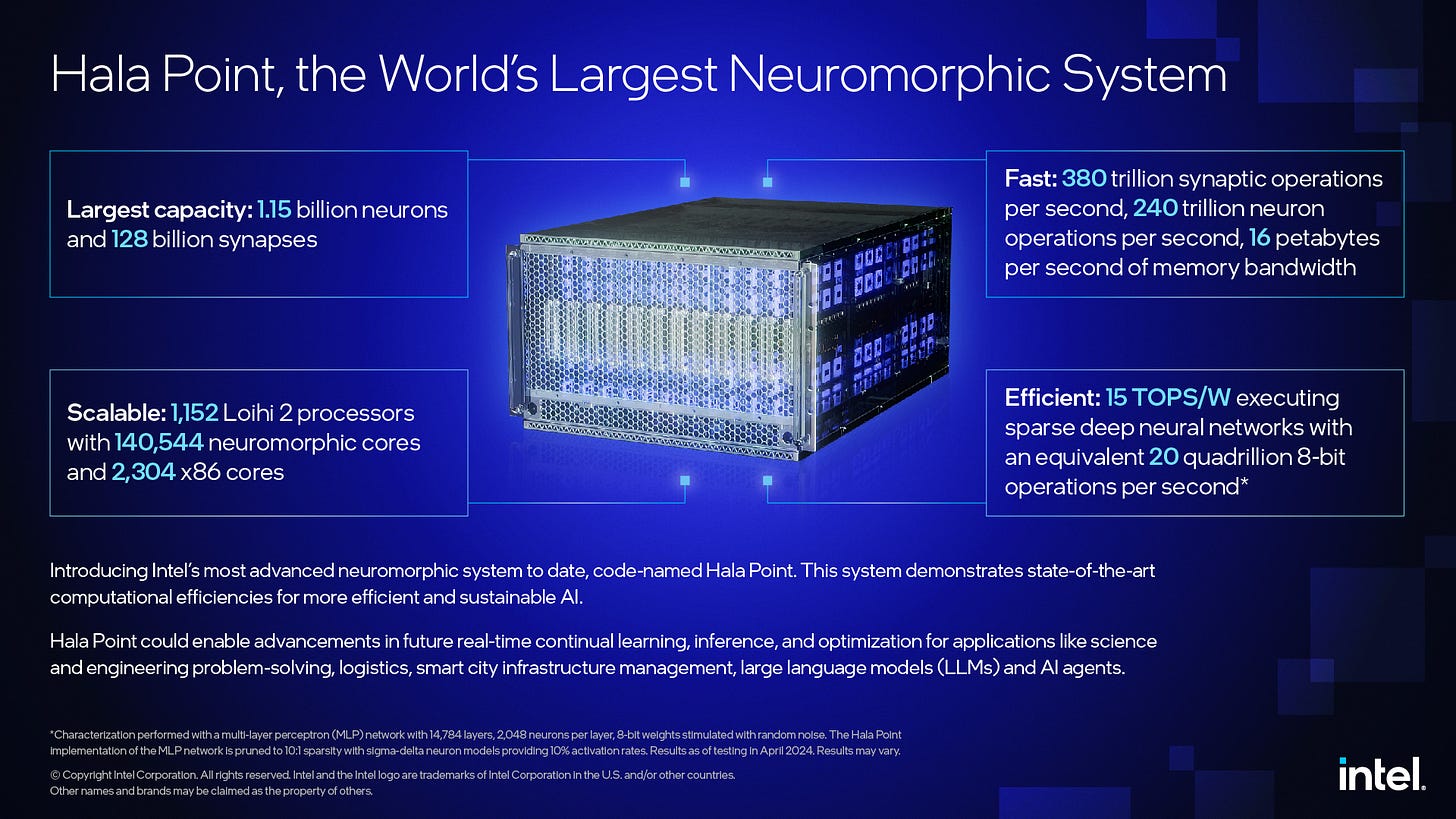

On the synthetic side, neuromorphic computing has been scaling rapidly. Unlike conventional processors, neuromorphic chips emulate spiking neural networks, responding to events rather than processing in synchronized cycles. Intel’s Hala Point system, deployed at Sandia National Laboratories, integrates roughly 1.15 billion simulated neurons across 1,152 Loihi 2 processors in a chassis roughly the size of a microwave oven [5]. BrainChip’s Akida architecture (AKD1500) targets low-power edge applications, achieving 800 GOPS while consuming minimal power [6]. SpiNNaker 2, developed jointly by TU Dresden and the University of Manchester, is designed for large-scale brain simulation and neuromorphic AI research [7]. Each of these systems processes information in ways that are structurally more similar to biological neurons than to traditional CPUs.

It is worth being precise about where neuromorphic hardware actually stands, because overstatement in this area is common. Intel’s neuromorphic research team has been direct: Hala Point is not a general-purpose AI accelerator, and the field does not yet have a neuromorphic equivalent of the transformer architecture [5]. Gartner’s 2025 analysis of neuromorphic computing places the technology still several years from broad market impact [8]. This matters not as a reason for skepticism about the long-term trajectory, but as a reason for honesty about the timeline. Neuromorphic hardware is a research platform maturing toward deployment, not a deployed alternative to GPUs.

The energy profile is striking. Where a large AI data center consumes megawatts and a GPU training cluster hundreds of kilowatts, a single neuromorphic chip operates on the order of watts [1, 2]. This isn’t just an incremental improvement. It represents a different computational philosophy, one organized around the principle that you should only compute when there’s something worth computing about.

The hypothesis driving convergence is that these two trajectories, biological efficiency and synthetic scalability, can be combined. In the most promising hybrid approach, called reservoir computing, a dynamic biological network acts as a complex signal transformer. The living neural cluster processes inputs through its natural, self-organized dynamics. The synthetic system only needs to train the output layer, dramatically reducing the computational burden. The biology does what biology is good at; the silicon does what silicon is good at.
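The division of labor in reservoir computing can be sketched in software with an echo state network, one common form of the technique. In this sketch a fixed random tanh network stands in for the living neural cluster, which is a large simplification, and only a linear readout is fitted by ridge regression. Every name, size, and parameter here is a hypothetical choice for illustration; none of it is drawn from a specific hybrid system.

```python
import math
import random

def make_reservoir(n_units, seed=0, scale=0.5):
    """Fixed random input and recurrent weights; these are never trained."""
    rng = random.Random(seed)
    w_in = [rng.uniform(-1, 1) for _ in range(n_units)]
    w_rec = [[rng.uniform(-1, 1) * scale / math.sqrt(n_units)
              for _ in range(n_units)] for _ in range(n_units)]
    return w_in, w_rec

def run_reservoir(w_in, w_rec, inputs):
    """Drive the reservoir with a scalar sequence and record its states."""
    n = len(w_in)
    state = [0.0] * n
    states = []
    for u in inputs:
        state = [math.tanh(w_in[i] * u
                           + sum(w_rec[i][j] * state[j] for j in range(n)))
                 for i in range(n)]
        states.append(state + [1.0])  # trailing 1.0 acts as a bias feature
    return states

def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def train_readout(states, targets, ridge=1e-3):
    """Ridge regression on recorded states: the only trained parameters."""
    d = len(states[0])
    xtx = [[sum(s[i] * s[j] for s in states) + (ridge if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    xty = [sum(s[i] * y for s, y in zip(states, targets)) for i in range(d)]
    return solve(xtx, xty)

def mse(states, targets, w_out):
    """Mean squared error of the linear readout on the recorded states."""
    preds = [sum(w * x for w, x in zip(w_out, s)) for s in states]
    return sum((p - y) ** 2 for p, y in zip(preds, targets)) / len(targets)

# Toy task (assumed for illustration): predict the next sample of a sine wave.
signal = [math.sin(0.3 * t) for t in range(201)]
inputs, targets = signal[:-1], signal[1:]
w_in, w_rec = make_reservoir(n_units=20)
states = run_reservoir(w_in, w_rec, inputs)
w_out = train_readout(states, targets)
baseline_error = mse(states, targets, [0.0] * len(w_out))  # untrained readout
trained_error = mse(states, targets, w_out)
```

The reservoir weights are generated once and never updated; all learning happens in `train_readout`. In a hybrid node, the recorded `states` would instead be spike-derived measurements from the living tissue, and the readout would remain the only part trained in silicon.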

Infrastructure at the Crossroads

Data centers currently consume approximately 1.4% of global electricity, a figure that AI expansion is pushing steadily upward, with IEA projections suggesting consumption could exceed 1,000 TWh annually by 2026 [2]. If hybrid nodes achieve practical viability, even in limited, specialized configurations, the energy arithmetic of computation begins to change.
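A quick conversion helps put the 1,000 TWh projection on the same footing as the wattage figures used elsewhere in this essay. The sketch below turns annual energy into average continuous power; the function name is illustrative, and the 8,760-hour year assumes a non-leap year.

```python
HOURS_PER_YEAR = 8760  # assumes a non-leap year

def average_power_gw(twh_per_year: float) -> float:
    """Convert annual energy in TWh to average continuous power in GW."""
    gwh_per_year = twh_per_year * 1000  # 1 TWh = 1,000 GWh
    return gwh_per_year / HOURS_PER_YEAR

projected_gw = average_power_gw(1000)  # about 114 GW, drawn around the clock
```

In other words, the projection corresponds to roughly 114 gigawatts of continuous demand, which is the baseline against which any savings from low-power hybrid nodes would be measured.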

This shift would also reshape the workforce and supply chains around computing. The skills required to maintain a living neural substrate are not the skills of a data center technician. The facilities required to grow and stabilize organoids are not server farms. An entirely new category of biotech-compute infrastructure would need to be built, staffed, and regulated, and the regulatory frameworks for that category do not yet exist.

At the edge, the implications may be even more immediate. The Internet of Bio-Nano Things, a concept in which biological components are integrated into distributed sensor networks [9], becomes considerably more plausible when the computational nodes themselves are bio-digital. Adaptive implantable health monitors, real-time neuro-prosthetics, and autonomous systems that learn from their environment without requiring cloud retraining are all downstream possibilities.

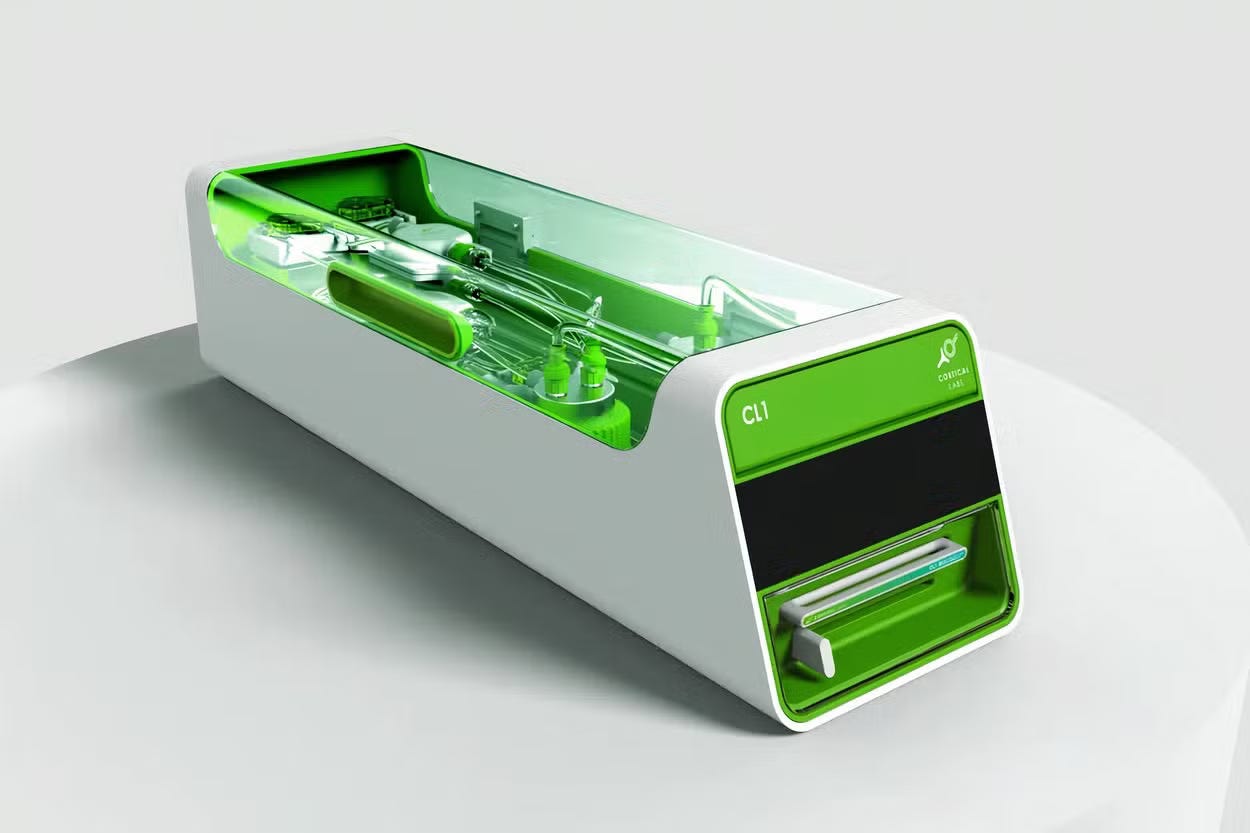

Commercial interest has crossed from research into the market in at least one significant case. In March 2025, Australian startup Cortical Labs launched the CL1 at Mobile World Congress: what the company describes as the world’s first commercial biological computer, combining approximately 800,000 lab-grown human neurons cultivated from stem cells with a silicon planar electrode array [10]. Each unit is priced at around $35,000 and houses its neural tissue in a closed life-support system (pumps, temperature regulation, nutrient delivery, and waste filtration) that keeps cells viable for up to six months [10]. The company also offers cloud access through a “Wetware-as-a-Service” model [10]. The CL1 is not yet a general-purpose computing platform; current applications center on drug discovery, disease modeling, and neuroscience research. But the transition from laboratory demonstration to commercial product with a catalog number and a price tag is not a minor development.

The challenge any honest accounting must address: living nodes fail differently than digital ones. A crashed server can be rebooted. A necrotic neural cluster cannot. Stability requirements for bio-digital infrastructure are not just technically demanding. They introduce a category of failure mode that has no precedent in the history of computing.

The Ethics of Embedded Life

If a neural organoid learns, adapts, and responds to stimuli, if it exhibits something that looks behaviorally like experience, what moral status does it hold? This is not a question that can be dismissed as premature. The history of ethics applied to technology is overwhelmingly a history of being too late: frameworks developed after exploitation is already underway, after harm has already been institutionalized.

The concern isn’t abstract. Human-derived biological tissue would form the substrate of these systems. The sourcing of that tissue raises questions of consent, equity, and ownership. If the cognitive properties of a hybrid system emerge from the biological component, who owns that cognition? If the system exhibits what researchers cautiously call “sentience-adjacent” properties (self-preservation behavior, adaptive learning, responsiveness to distress signals), at what point does it become something other than property?

The phrase “commodification of living intelligence” may sound overwrought, but it captures a genuine risk: that we build an industry around using living matter as computational substrate before we have any shared framework for what obligations that use creates. The precedent of human cell lines commercialized without donor knowledge or consent, most famously the HeLa cells derived from Henrietta Lacks, illustrates that this risk is not hypothetical [11]. The pattern is consistent: biological material becomes commercially valuable before ethical frameworks for its use exist. By the time the frameworks arrive, the industry has already formed around their absence.

The philosophical dimension is no simpler, and it requires engaging seriously with what we actually know, and don’t know, about consciousness.

David Chalmers’ formulation of the “hard problem” remains the most precise statement of the difficulty: explaining why any physical process gives rise to subjective experience at all is categorically different from explaining how the brain performs its functional tasks [12]. We can, in principle, explain how a system processes information, integrates signals, and produces outputs. Explaining why there is something it is like to be that system is a different question entirely, and it has no consensus answer.

Giulio Tononi’s Integrated Information Theory (IIT) proposes that consciousness is a property of systems with high degrees of integrated information, not a threshold unique to biological brains, but a continuum present wherever information is integrated in ways that cannot be decomposed into independent parts [13]. If IIT is correct, sufficiently complex organoid networks could in principle possess some degree of conscious experience, regardless of their substrate. This is not a fringe position. It is a serious scientific hypothesis with active empirical research programs.

Stanislas Dehaene’s global neuronal workspace theory, which builds on Bernard Baars’ global workspace model, takes a different approach: consciousness arises when information is broadcast widely enough across neural networks to become globally available for report, reasoning, and behavioral control [14]. By this account, the relevant question for bio-digital systems isn’t structural complexity but functional integration: whether information becomes globally available across the hybrid system’s architecture.

Neither theory resolves the organoid question. What they do is make clear that the question cannot be set aside by asserting that organoids are “just tissue.” The same dismissal would rule out large swaths of animal consciousness, which most thoughtful people now reject. As organoids develop greater functional complexity and longer operational lifespans, the moral status question will become empirically tractable. That means we will need ethical frameworks before we have definitive answers, not after.

The commodification risk is most acute in this gap. An industry built on biological computation before moral status frameworks exist will optimize around whatever is profitable. The precedent of HeLa cells suggests those optimizations will be made without the knowledge or consent of the people whose biology made them possible.

Autonomy and privacy present a related but distinct set of concerns. Bio-digital interfaces could enhance human cognition and enable adaptive prosthetics. They could also enable forms of cognitive surveillance with no historical analog, monitoring not just behavior but the neural correlates of thought itself. The collapse of the boundary between biological cognition and digital computation is, among other things, a collapse of the boundary between mind and data.

Policy frameworks lag dramatically behind technical capability, not by months, but by years. What will be needed, at minimum: biocompute rights frameworks, strict sourcing and consent regulations, transparency mandates for hybrid system development, and standing ethical review bodies with genuine authority over commercial deployment. These are not optional considerations. They are prerequisites for building this technology in a way that doesn’t replicate the mistakes of every previous biological commercialization cycle.

Stabilize Before Scaling

The argument of this piece is not that bio-digital convergence is good or bad, inevitable or avoidable. It is that the convergence is real, driven by genuine technical progress in organoid maturation and neuromorphic hardware, and that the architectural mutation it represents, embedding metabolism into computation, raises questions that pure engineering cannot answer.

What is not determined is the ethical architecture of what gets built. Whether hybrid intelligence nodes are designed with their potential moral status in mind from the beginning, or whether that question is retrofitted after the industry has already formed. Whether sourcing of biological material is governed by consent and equity, or by whatever is cheapest. Whether cognitive surveillance becomes a feature or is prohibited as a category.

The future of computation will not be purely digital. The systems we build will, in some meaningful sense, breathe. The question is whether we design that future with the restraint and foresight it requires, or whether we arrive at its implications the way we usually arrive at the implications of powerful technologies: surprised, underprepared, and already trying to contain the consequences.

What do you think?

The systems we are building will, in a very literal sense, breathe.

They will metabolize energy, self-organize, adapt in real time, and, when they fail, experience cellular necrosis rather than a simple reboot.

So here is the question we can no longer treat as premature:

If a neural organoid learns, adapts, and produces coordinated, experience-like activity, at what point does it stop being “property” and start demanding moral consideration?

I’m genuinely curious where you draw that line, and why.

Drop your thoughts in the comments below. I read every single one.

If this piece shifted how you see the future of computation, please share it. The ethical architecture we design now will shape everything that comes next.

And if you want more deep dives at the intersection of emerging technology, biology, and ethics, consider becoming a paid subscriber; new essays like this drop regularly.

Thank you for reading all the way through.

— Shank

References

[1] Lawrence Berkeley National Laboratory. United States Data Center Energy Usage Report. 2016.

[2] International Energy Agency. Electricity 2024. IEA, 2024. Available: https://www.iea.org/reports/electricity-2024

[3] Sengupta, B., Stemmler, M.B., & Friston, K.J. “Information and Efficiency in the Nervous System.” PLOS Computational Biology, 2013. See also: Attwell, D. & Laughlin, S.B. “An Energy Budget for Signaling in the Grey Matter of the Brain.” Journal of Cerebral Blood Flow & Metabolism, 21(10), 2001. DOI: 10.1097/00004647-200110000-00001

[4] Kathuria, A. et al. “Multi-Region Brain Organoids Integrating Cerebral, Mid-Hindbrain, and Endothelial Systems.” Advanced Science, July 2025. DOI: 10.1002/advs.202503768

[5] Intel Corporation. “Intel Builds World’s Largest Neuromorphic System.” Intel Newsroom, 2024. Available: https://newsroom.intel.com/artificial-intelligence/intel-builds-worlds-largest-neuromorphic-system-to-enable-more-sustainable-ai. See also: Sandia National Laboratories. “1.15 Billion Artificial Neurons Arrive at Sandia.” Available: https://newsreleases.sandia.gov/artificial_neuron/

[6] BrainChip Holdings. AKD1500 Edge AI Co-Processor: Product Brief. October 2025. Available: https://brainchip.com/wp-content/uploads/2025/10/AKD1500-Product-Brief-V2.4-Oct.25.pdf

[7] Mayr, C. et al. “SpiNNaker 2: A 10 Million Core Processor System for Brain Simulation and Machine Learning.” arXiv:1911.02385, 2019.

[8] Gartner. Emerging Technologies: Tech Innovators in Neuromorphic Computing. June 2025. Available: https://www.gartner.com/en/documents/6579302

[9] Akyildiz, I.F. & Jornet, J.M. “The Internet of Bio-Nano Things.” IEEE Communications Magazine, 53(3), 2015. DOI: 10.1109/MCOM.2015.7060516

[10] Cortical Labs. CL1 product page and MWC 2025 launch. Available: https://corticallabs.com/cl1

[11] Skloot, R. The Immortal Life of Henrietta Lacks. Crown Publishers, 2010.

[12] Chalmers, D.J. “Facing Up to the Problem of Consciousness.” Journal of Consciousness Studies, 2(3):200-219, 1995. Available: https://consc.net/papers/facing.pdf

[13] Tononi, G. “An Information Integration Theory of Consciousness.” BMC Neuroscience, 5:42, 2004. DOI: 10.1186/1471-2202-5-42. See also: Tononi, G. “Consciousness as Integrated Information: A Provisional Manifesto.” Biological Bulletin, 215(3), 2008.

[14] Dehaene, S. Consciousness and the Brain: Deciphering How the Brain Codes Our Thoughts. Viking/Penguin, 2014.