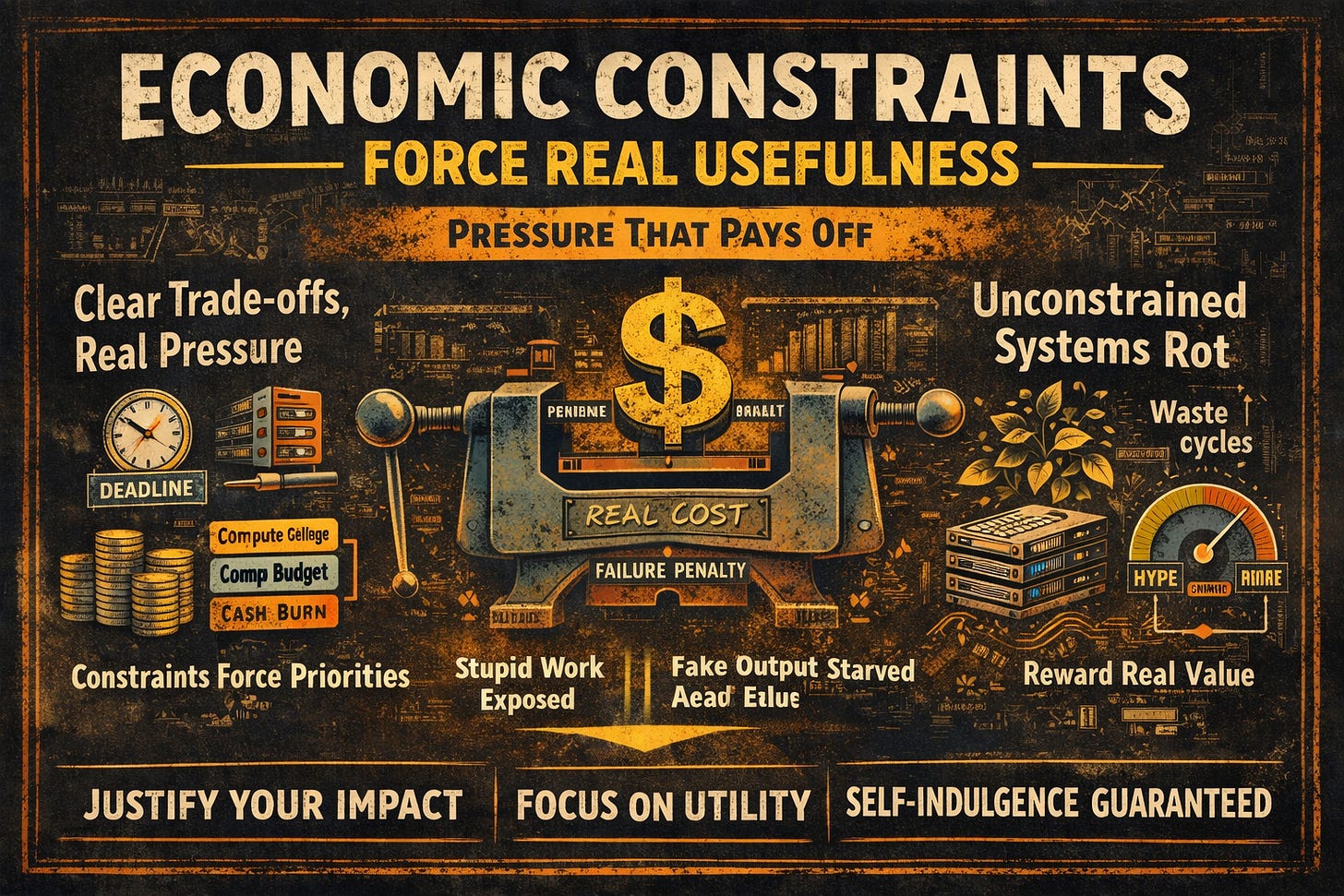

Economic Constraints Force Real Usefulness

What happens if a system doesn't pay rent?

Any system, whether it’s a piece of software, a business model, or an AI agent, risks drifting into irrelevance or self-indulgence if it doesn’t pay some form of rent. That “rent” could be compute, money, time, or even trust. Economic constraints aren’t mere hurdles; they’re the enforcers of genuine usefulness, weeding out the fluff and demanding real value.

At its core, this idea flips the script on constraints. They aren’t limitations holding us back; they’re selection pressures that drive evolution. Without them, systems default to optimizing for internal metrics, like code elegance or feature count, rather than delivering external value that actually solves problems.

But what do we mean by “economic constraints”? It’s broader than dollars and cents. Think of compute budgets that cap how much processing you can throw at a task; latency ceilings that demand speed over perfection; memory caps that force efficient design; operator time, which turns human oversight into a scarce resource; cognitive load on users, which pushes interfaces to stay intuitive; and failure penalties that make mistakes costly enough to avoid. If none of these bite, and there’s no real pain point, the system has no incentive to improve. It floats in a vacuum, unchallenged and unchanging.
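To make “bite” concrete, here is a minimal Python sketch of a per-task budget that refuses work once a cumulative resource runs out. The `ConstraintBudget` class, the `BudgetExceeded` exception, and the resource names are hypothetical illustrations, not an existing library. Per-request ceilings such as latency would be checked on each call rather than accumulated, so they are left out.

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a charge would push a tracked resource past its limit."""


@dataclass
class ConstraintBudget:
    # Hypothetical per-task limits for cumulative resources; the numbers
    # used below are placeholders, not recommendations.
    limits: dict[str, float]
    spent: dict[str, float] = field(default_factory=dict)

    def charge(self, resource: str, amount: float) -> None:
        """Record usage, refusing any charge that would exceed the limit."""
        if resource not in self.limits:
            raise KeyError(f"no limit configured for {resource!r}")
        used = self.spent.get(resource, 0.0)
        if used + amount > self.limits[resource]:
            raise BudgetExceeded(
                f"{resource}: {used + amount:g} would exceed {self.limits[resource]:g}"
            )
        self.spent[resource] = used + amount

    def remaining(self, resource: str) -> float:
        return self.limits[resource] - self.spent.get(resource, 0.0)


if __name__ == "__main__":
    budget = ConstraintBudget(
        limits={"compute_seconds": 30.0, "operator_minutes": 5.0, "failure_penalty_usd": 1000.0}
    )
    budget.charge("compute_seconds", 12.5)
    print(budget.remaining("compute_seconds"))  # 17.5
    try:
        budget.charge("operator_minutes", 6)  # a task that needs too much babysitting
    except BudgetExceeded as err:
        print("refused:", err)
```

The design choice worth noting is that a refused charge leaves the budget untouched: the system is told “no” before it spends, not after.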

Unconstrained systems inevitably rot from within. They balloon with features that sound impressive but add little utility, chasing internal elegance or novelty for its own sake. They thrive on hype rather than measurable outcomes, surviving because no one questions their existence. Consider LLM tools that demand constant manual babysitting: they’re subsidized by users’ endless patience, not by delivering seamless results. Or open-ended AI agents with no budget, which burn through cycles on “interesting” but pointless explorations. Even dashboards that dazzle with visuals but gather dust in operational settings fall into this trap: pretty, but purposeless.

Constraints, on the other hand, act as a reality check. They force ruthless prioritization by asking: What truly matters here? They expose fake work quickly, because effort that produces nothing still shows up as cost. And they reward systems that operate efficiently, those that “shut up, act, and get out of the way,” delivering value without unnecessary fanfare.
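One simple way to picture ruthless prioritization is greedy selection: rank candidate work by estimated value per unit of cost and fund items until the budget runs out. This is a sketch under assumptions: the `Task` fields, the value estimates, and the greedy rule itself are illustrative choices, and greedy selection is a heuristic for the knapsack problem, not a guaranteed optimum.

```python
from typing import NamedTuple


class Task(NamedTuple):
    name: str
    value: float  # estimated external value delivered (hypothetical units)
    cost: float   # resource cost, e.g. compute seconds or operator minutes


def prioritize(tasks: list[Task], budget: float) -> list[Task]:
    """Fund tasks in order of value per unit cost until the budget runs out.

    This is the classic greedy heuristic for a 0/1 knapsack problem: cheap
    and easy to audit, but not guaranteed to find the optimal subset.
    """
    if any(t.cost <= 0 for t in tasks):
        raise ValueError("every task must have a positive cost")
    chosen: list[Task] = []
    remaining = budget
    for task in sorted(tasks, key=lambda t: t.value / t.cost, reverse=True):
        # Skip work with no external value and work that no longer fits.
        if task.value > 0 and task.cost <= remaining:
            chosen.append(task)
            remaining -= task.cost
    return chosen


if __name__ == "__main__":
    backlog = [
        Task("fix checkout bug", value=90, cost=3),
        Task("restyle dashboard theme", value=10, cost=5),
        Task("cut p95 latency", value=60, cost=4),
        Task("explore novel feature", value=5, cost=8),
    ]
    print([t.name for t in prioritize(backlog, budget=8)])
    # ['fix checkout bug', 'cut p95 latency']
```

With a budget of 8, the sketch funds the checkout fix and the latency work and drops the cosmetic and speculative items: the pressure described above, in miniature.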

Constraints don’t guarantee quality, though. They only apply pressure, and quality emerges only when that pressure aligns with real-world needs. Misaligned constraints can backfire, producing brittle systems that break under stress or optimize for the wrong things.

This is where people often go astray. It’s not true that “more constraints equal a better system”; piling on arbitrary limits just breeds inefficiency. Bad constraints, like rigid rules that ignore context, lead to fragility. Good ones map directly to the real costs of failure, so the system is tested against what actually counts.
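One way to read “map directly to the real costs of failure” is a decision threshold derived from costs rather than picked arbitrarily. Assume a classifier emits a calibrated probability p that an item is bad, a wrongly flagged item costs C_fp, and a wrongly passed item costs C_fn. Flagging then minimizes expected cost exactly when p * C_fn > (1 - p) * C_fp, that is, when p > C_fp / (C_fp + C_fn). The function names and dollar figures below are hypothetical.

```python
def cost_aligned_threshold(cost_false_positive: float, cost_false_negative: float) -> float:
    """Probability above which flagging an item minimizes expected cost.

    Flagging costs (1 - p) * C_fp in expectation, passing costs p * C_fn,
    so flag when p > C_fp / (C_fp + C_fn). Assumes p is calibrated.
    """
    if cost_false_positive < 0 or cost_false_negative < 0:
        raise ValueError("costs must be non-negative")
    total = cost_false_positive + cost_false_negative
    if total == 0:
        raise ValueError("at least one cost must be positive")
    return cost_false_positive / total


def should_flag(p: float, cost_false_positive: float, cost_false_negative: float) -> bool:
    return p > cost_aligned_threshold(cost_false_positive, cost_false_negative)


if __name__ == "__main__":
    # Hypothetical figures: a $20 analyst review vs. a $980 missed fraud.
    print(cost_aligned_threshold(20, 980))
    print(should_flag(0.05, 20, 980), should_flag(0.01, 20, 980))
```

With a $20 review and a $980 miss, the threshold is 0.02, far below a habitual 0.5, because a miss costs 49 times more than a false alarm. An arbitrary 0.5 cutoff is exactly the kind of constraint that ignores what failure really costs.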

In closing, if your system can’t fail expensively—in terms of resources, reputation, or results—it will succeed uselessly, existing without purpose. Economic constraints are the gatekeepers of relevance.

(As a companion to my previous piece on determinism, which explores trusting system outcomes, these concepts interlock powerfully. Determinism asks, “Can I trust what happened?” while economic constraints ask, “Should this exist at all?” On their own, determinism without constraints yields elegant but trivial toys, and constraints without determinism spawn chaotic, unreliable survivors. Combined, they forge systems that truly earn their place in the world.)

Such systems pair determinism (explicit, reproducible if-then rules and auditable logic, so the same input always yields the same traceable output) with economic constraints (strict compute budgets, latency limits, token and cost metering for any LLM usage, failure penalties such as regulatory fines, and caps on operator time).

For instance:

A deterministic core enforces regulatory compliance and repeatability, both critical for audit trails under rules like the EU AI Act or financial reporting standards, and keeps hallucinated or inconsistent decisions out of the final outcome.
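A minimal sketch of such a deterministic core, assuming decisions come from an ordered rule list where the first matching rule wins. Each call returns the decision together with an audit entry: a SHA-256 digest of the canonicalized input and the ID of the rule that fired, so replaying the same input reproduces the same record. The rules, thresholds, and the placeholder country code `XX` are hypothetical, not drawn from any actual regulation.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable

Record = dict[str, object]


@dataclass(frozen=True)
class Rule:
    rule_id: str
    condition: Callable[[Record], bool]
    outcome: str


# Hypothetical rules for illustration only; order matters, first match wins.
RULES: tuple[Rule, ...] = (
    Rule("R1-blocked-country", lambda r: r["country"] in {"XX"}, "reject"),
    Rule("R2-large-amount", lambda r: r["amount"] > 10_000, "manual_review"),
    Rule("R3-default", lambda r: True, "approve"),
)


def decide(record: Record, rules: tuple[Rule, ...] = RULES) -> dict[str, object]:
    """Apply rules in a fixed order and return the decision plus an audit entry.

    The input is serialized with sorted keys so that the digest does not
    depend on dictionary insertion order.
    """
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    for rule in rules:
        if rule.condition(record):
            return {"input_sha256": digest, "rule_id": rule.rule_id, "decision": rule.outcome}
    raise ValueError("no rule matched; the rule set should end with a default")


if __name__ == "__main__":
    print(decide({"country": "DE", "amount": 12_000}))
```

Because nothing in `decide` reads a clock, a random source, or a model, an auditor can rerun any logged input and expect the identical record.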

Economic pressure (e.g., per-query costs, high failure penalties for misclassifications, and tight inference budgets) forces the system to minimize unnecessary LLM calls, prioritize high-value actions, and “shut up and act” efficiently rather than over-exploring or generating verbose outputs.
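This cost pressure can be sketched as a meter that sits in front of any model call. In this hypothetical setup, deterministic rules run first; the LLM (`call_llm`, a stand-in for whatever client you actually use) is consulted only when the rules are inconclusive and the estimated token cost still fits the per-query budget; otherwise the item goes to a person. Prices, token estimates, and names are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CostMeter:
    """Hard per-query spend limit; prices are hypothetical placeholders."""
    budget_usd: float
    price_per_1k_tokens_usd: float
    spent_usd: float = 0.0

    def estimate(self, tokens: int) -> float:
        return tokens / 1000 * self.price_per_1k_tokens_usd

    def try_spend(self, tokens: int) -> bool:
        """Book the estimated cost of a call only if it fits the remaining budget."""
        cost = self.estimate(tokens)
        if self.spent_usd + cost > self.budget_usd:
            return False
        self.spent_usd += cost
        return True


def classify(
    text: str,
    rule_label: Optional[str],
    meter: CostMeter,
    call_llm: Callable[[str], str],  # hypothetical stand-in for a real LLM client
    est_tokens: int,
) -> str:
    # Cheapest path first: if the deterministic rules already decided, stop.
    if rule_label is not None:
        return rule_label
    # Pay for the model only when rules are inconclusive and the budget allows.
    if meter.try_spend(est_tokens):
        return call_llm(text)
    # Budget exhausted: escalate to a person instead of spending more.
    return "needs_human_review"
```

The order of checks is the point: rules are free, so they always run first, and an exhausted budget degrades to human review rather than silently overspending.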

Without determinism, the system risks chaotic, untrustworthy outcomes (failed audits or financial losses). Without constraints, it becomes an expensive, feature-bloated toy that burns resources on novelty without delivering ROI. Together, they produce production-grade tools: reliable enough for regulated environments, cost-effective enough to scale profitably, and valuable enough to displace manual processes. Such tools earn their place by consistently delivering measurable business impact, like reduced fraud losses or faster approvals with minimal compliance risk.

This hybrid model appears in tools like robotic process automation (RPA) augmented with guarded LLM steps, and in clinical or financial decision support systems that layer probabilistic creativity atop rigid, cost-gated rules. Both illustrate the interlocking power of trust and enforced usefulness.
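A “guarded LLM step” can be as small as a wrapper that lets hard rules decide first and accepts the model's answer only if it matches a fixed label schema. The sketch below assumes hypothetical `hard_rule` and `llm_suggest` callables and an illustrative label set; anything the model says outside that schema falls back to manual review rather than being interpreted.

```python
from typing import Callable, Optional

# Hypothetical label schema; a real system would take this from its spec.
ALLOWED = frozenset({"approve", "reject", "manual_review"})


def guarded_decision(
    record: dict,
    hard_rule: Callable[[dict], Optional[str]],
    llm_suggest: Callable[[dict], str],  # hypothetical probabilistic step
) -> tuple[str, str]:
    """Return (decision, source).

    Deterministic rules outrank the model, and the model's output is accepted
    only if, after trimming and lowercasing, it is one of the allowed labels.
    """
    ruled = hard_rule(record)
    if ruled is not None:
        return ruled, "rule"
    suggestion = llm_suggest(record).strip().lower()
    if suggestion in ALLOWED:
        return suggestion, "llm"
    # Anything outside the schema is treated as a failure, not improvised around.
    return "manual_review", "fallback"
```

Returning the source alongside the decision keeps the audit trail honest about which layer actually made each call.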